TL;DR

- AI search has no 'Position 1' — there is only cited or not cited

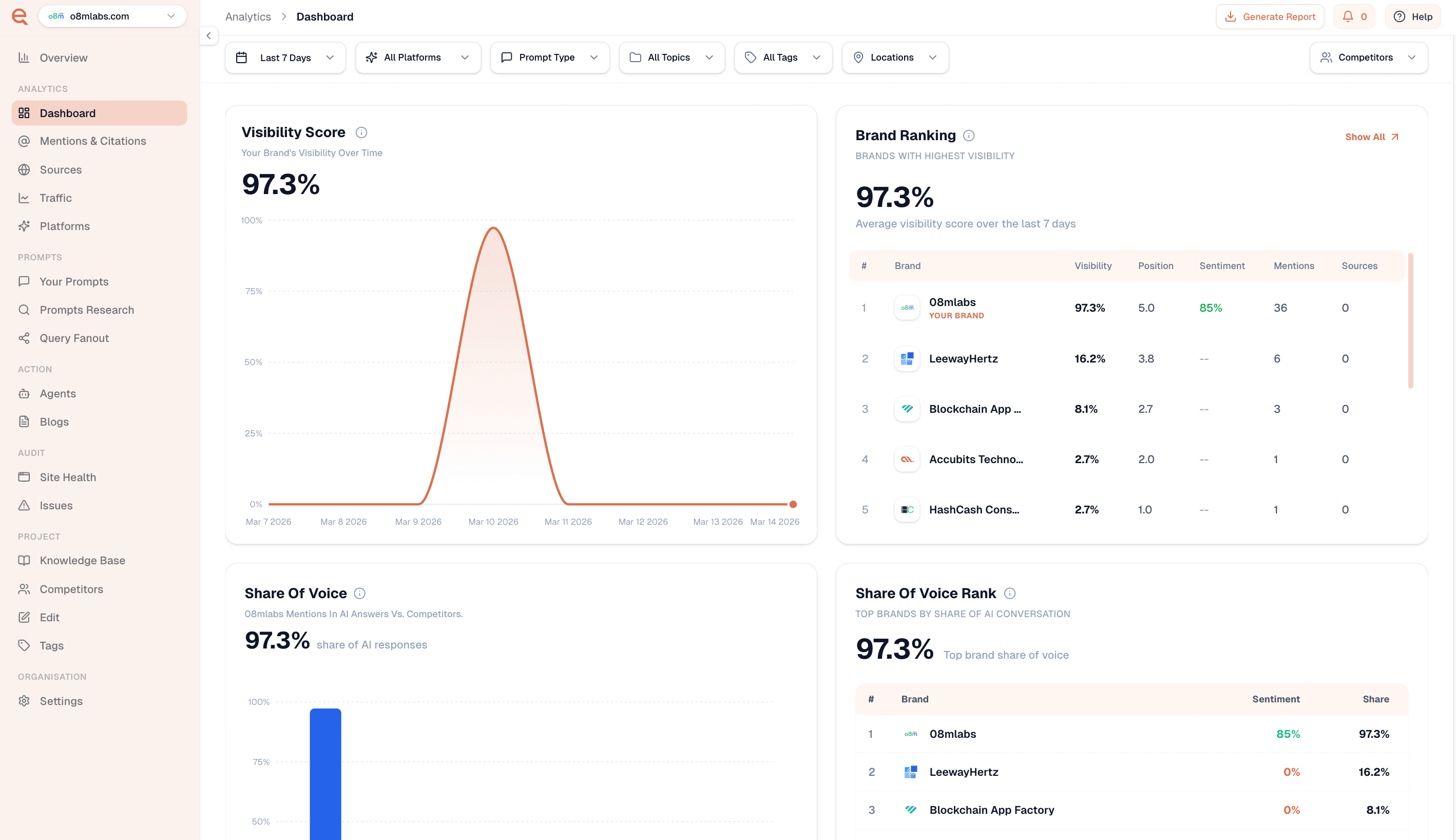

- Four key metrics: Visibility Score, Share of Voice, Average Position, Sentiment Score

- Track metrics per AI platform — ChatGPT and Perplexity have very different patterns

- Category leaders typically achieve 40%+ Visibility and 20%+ Share of Voice

Why Traditional Metrics Fail in AI Search

In traditional SEO, the goal is Position 1. In AI search, there is no Position 1. There is only cited or not cited, first mention or fifth mention, positive framing or neutral framing. The metrics therefore have to capture presence, mention order, and framing rather than rank on a list.

The Four Metrics That Actually Matter

1. Visibility Score

The percentage of tracked prompts where your brand appears in the AI's answer — across all platforms. This is your headline metric.
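As a rough illustration, here is a minimal Python sketch of the calculation. It assumes each (prompt, platform) answer counts as one observation; the record layout, brand names, and function names are hypothetical and not taken from any particular tool.

```python
from collections import defaultdict

# Hypothetical tracking data: one record per (prompt, platform) answer,
# with the brands that answer mentioned. All names are illustrative.
answers = [
    {"prompt": "best crm for startups", "platform": "chatgpt", "brands": ["Acme", "Globex"]},
    {"prompt": "best crm for startups", "platform": "perplexity", "brands": ["Globex"]},
    {"prompt": "crm with email sync", "platform": "chatgpt", "brands": ["Acme"]},
    {"prompt": "crm with email sync", "platform": "perplexity", "brands": []},
]


def visibility_score(records, brand):
    """Percentage of tracked answers in which `brand` appears."""
    if not records:
        return 0.0
    hits = sum(1 for r in records if brand in r["brands"])
    return 100.0 * hits / len(records)


def visibility_by_platform(records, brand):
    """Visibility Score computed separately for each platform."""
    grouped = defaultdict(list)
    for r in records:
        grouped[r["platform"]].append(r)
    return {platform: visibility_score(rows, brand) for platform, rows in grouped.items()}


print(visibility_score(answers, "Acme"))        # headline number across all platforms
print(visibility_by_platform(answers, "Acme"))  # same metric, split per platform
```

In this toy data the headline score for "Acme" is 50%, but the per-platform split is 100% on one platform and 0% on the other, which is why the per-platform view is worth keeping next to the headline number.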

2. Share of Voice

Of all AI answers in your category that mention any brand, what percentage mention yours? This tells you how you're doing relative to your competitive set.
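The definition above can be sketched in a few lines of Python. This is an illustration under stated assumptions: each answer is reduced to the list of brands it mentions, and the brand names and the `share_of_voice` function are hypothetical.

```python
# Hypothetical data: each inner list holds the brands one AI answer mentioned.
answers = [
    ["Acme", "Globex"],
    ["Globex"],
    [],                    # answer that mentions no brand at all
    ["Initech", "Globex"],
    ["Acme"],
]


def share_of_voice(brand_lists, brand):
    """Of the answers that mention any brand, the percentage that mention `brand`."""
    with_any_brand = [brands for brands in brand_lists if brands]
    if not with_any_brand:
        return 0.0
    hits = sum(1 for brands in with_any_brand if brand in brands)
    return 100.0 * hits / len(with_any_brand)


print(share_of_voice(answers, "Acme"))
print(share_of_voice(answers, "Globex"))
```

Note that under this per-answer definition the shares of different brands need not add up to 100%, because a single answer can mention several brands.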

3. Average Position

When AI mentions your brand alongside competitors, where do you tend to appear? First mention carries significantly more weight than fifth mention.
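One plausible way to compute this, sketched in Python: take the 1-based mention order of your brand in every answer where it appears next to at least one competitor, and average it. The data layout, brand names, and the choice to skip answers with no competitors are assumptions made for illustration.

```python
# Hypothetical data: brands in the order each AI answer mentioned them.
answers = [
    ["Acme", "Globex"],
    ["Globex", "Initech", "Acme"],
    ["Globex"],
    ["Acme"],              # no competitor present, so it is skipped below
]


def average_position(brand_lists, brand):
    """Mean 1-based mention order of `brand` in answers that also name a competitor.

    Returns None when the brand never appears alongside a competitor.
    """
    positions = [
        brands.index(brand) + 1
        for brands in brand_lists
        if brand in brands and len(brands) > 1
    ]
    if not positions:
        return None
    return sum(positions) / len(positions)


print(average_position(answers, "Acme"))
print(average_position(answers, "Globex"))
```

Lower is better here: a value close to 1 means the brand is usually the first one named.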

4. Sentiment Score

AI answers don't just mention brands — they frame them. Tracking sentiment gives you an early warning system for reputation issues.
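The article does not prescribe a scoring formula, so the following is only one possible sketch. It assumes each mention has already been labelled positive, neutral, or negative (by manual review or a classifier of your choice), maps those labels to +1, 0, and -1, and averages them. The labels, weights, and function name are all hypothetical.

```python
# Assumed mapping from framing label to a numeric value.
LABEL_VALUES = {"positive": 1, "neutral": 0, "negative": -1}


def sentiment_score(labels):
    """Mean sentiment in the range [-1, 1]; None when there are no mentions."""
    if not labels:
        return None
    return sum(LABEL_VALUES[label] for label in labels) / len(labels)


# Hypothetical labels for four mentions of one brand.
mentions = ["positive", "neutral", "positive", "negative"]
print(sentiment_score(mentions))
```

Tracking this number over time, rather than reading it once, is what makes it usable as an early warning: a falling score suggests the framing is shifting even if the brand is still being mentioned.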

Setting Benchmarks

- Early stage (0-3 months) — Visibility 5-15%, Share of Voice 3-8%

- Growing (3-12 months) — Visibility 20-35%, Share of Voice 10-18%

- Category leader — Visibility 40%+, Share of Voice 20%+
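To place your own numbers against these benchmarks, a small helper like the one below can work. It is a sketch with two assumptions that go beyond the list above: the lower bound of each range is treated as the threshold, and a stage is reached only when both Visibility and Share of Voice clear it. The function name is hypothetical.

```python
def benchmark_stage(visibility, share_of_voice):
    """Map Visibility and Share of Voice percentages to a benchmark stage.

    Assumption: both metrics must reach a stage's lower bound to qualify.
    """
    if visibility >= 40 and share_of_voice >= 20:
        return "Category leader"
    if visibility >= 20 and share_of_voice >= 10:
        return "Growing"
    return "Early stage"


print(benchmark_stage(45, 22))
print(benchmark_stage(25, 12))
print(benchmark_stage(45, 12))  # strong Visibility, but Share of Voice still below 20%
```

Requiring both metrics is a deliberately conservative choice: high Visibility alongside a low Share of Voice usually means competitors are being mentioned in the same answers.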

Frequently Asked Questions

What is Share of Voice in AI search?

Share of Voice is the percentage of AI answers in your category that mention your brand, out of all answers that mention any brand. It shows your competitive position in AI citations.

How is AI search visibility different from SEO ranking?

SEO ranking is a position on a list. AI visibility measures whether your brand appears in synthesised answers at all, plus context like mention order and sentiment.

Hema Team

Contributor