TL;DR

- AI models expand user queries into 8-15 sub-queries before generating answers

- Brands that appear across multiple fanout queries are more likely to be cited in the final answer

- Fanout queries differ significantly from traditional keyword research data

- Each AI platform has distinct fanout behaviour and source weighting

What Actually Happens When You Ask AI a Question

A user types: 'What's the best CRM for a fintech startup?' ChatGPT doesn't just answer that question. It expands it into 8-15 related sub-queries, runs all of them, synthesises the results, and then answers. The brands that appear across those sub-queries win the answer.

This expansion process is called a query fanout. It's the AI equivalent of a researcher pulling out twelve related reference books rather than just one.
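To make the expand, run, and synthesise steps concrete, here is a minimal Python sketch. It is a toy illustration, not how any particular assistant works: the templates in `TEMPLATES`, the `expand_query` and `run_fanout` helpers, and every brand name and result in `TOY_RESULTS` are invented for this example. A real assistant generates its sub-queries with a model and runs them against a live search index.

```python
from collections import Counter

# Hypothetical sub-query templates. A real assistant would generate these
# with a language model rather than from a fixed list.
TEMPLATES = [
    "{q}",
    "{q} pricing",
    "{q} reviews",
    "{q} compliance features",
]

# Toy stand-in for a search index: sub-query -> brands found in the results.
# All brand names and results are invented for illustration.
TOY_RESULTS = {
    "best CRM for fintech startup": ["AlphaCRM", "BetaCRM"],
    "best CRM for fintech startup pricing": ["AlphaCRM", "GammaCRM"],
    "best CRM for fintech startup reviews": ["AlphaCRM", "BetaCRM"],
    "best CRM for fintech startup compliance features": ["AlphaCRM"],
}


def expand_query(query):
    """Fan the user's query out into several related sub-queries."""
    return [template.format(q=query) for template in TEMPLATES]


def run_fanout(query):
    """Run every sub-query and count how many of them surface each brand."""
    counts = Counter()
    for sub_query in expand_query(query):
        # set() ensures a brand is counted at most once per sub-query.
        for brand in set(TOY_RESULTS.get(sub_query, [])):
            counts[brand] += 1
    return counts


counts = run_fanout("best CRM for fintech startup")
for brand, hits in counts.most_common():
    print(f"{brand}: appears in {hits} of {len(TEMPLATES)} sub-queries")
```

In this toy data, the brand that shows up in every sub-query tops the tally, which is the pattern the article describes: presence across the fanout, not just on the original prompt.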

Why This Matters More Than Traditional Keyword Research

Traditional keyword research tells you what people type into Google. Query fanout analysis tells you what questions AI is running on their behalf — the background layer of queries that users never see and traditional tools never capture.

How Fanout Queries Differ by Platform

Each AI platform has its own fanout behaviour. Perplexity is aggressive — it generates more sub-queries and surfaces more sources. ChatGPT with browsing is more selective. Gemini incorporates Google's index and tends to favour content that already ranks well in traditional search.

How to Use This in Your Content Strategy

- For any important category query, map out the likely fanout — what related questions would an AI generate?

- Create content that directly answers the fanout queries, not just the original prompt

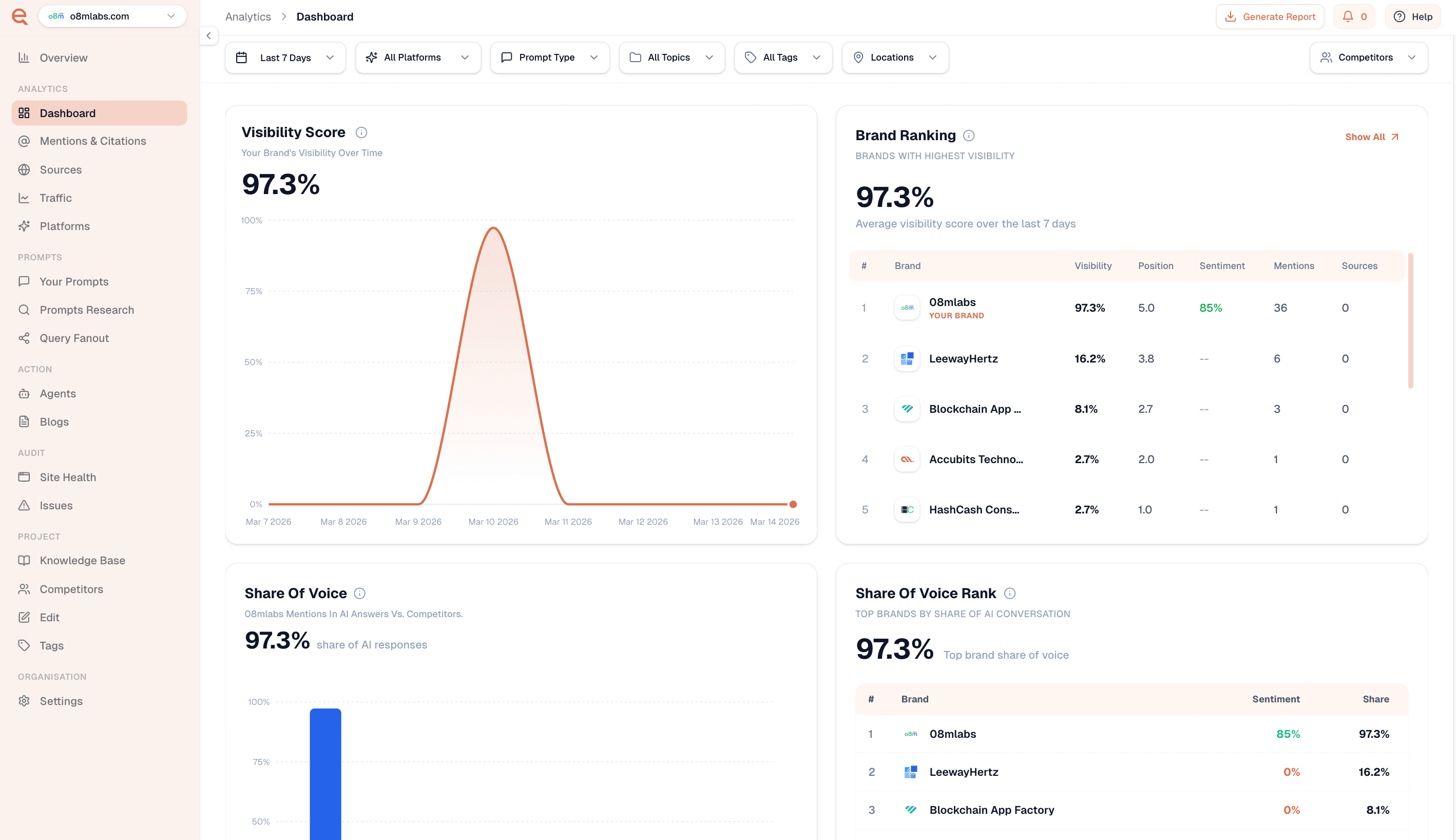

- Identify which fanout queries your competitors are cited for that you are not

- Structure each piece of content around a single, specific question for clean AI extraction
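The competitor-gap step in the list above can be sketched in a few lines of Python. This assumes you have already collected, by hand or with a tracking tool, which brands each AI answer cites for each fanout query. The `citations` data, the brand names, and the `citation_gaps` and `coverage` helpers are all hypothetical.

```python
# Hypothetical citation log: fanout query -> brands cited in the AI answer.
# In practice this would come from your own tracking, not hard-coded data.
citations = {
    "crm pricing for startups": {"YourBrand", "CompetitorA"},
    "crm with kyc compliance": {"CompetitorA"},
    "crm integrations with banking apis": {"CompetitorA", "CompetitorB"},
    "crm onboarding time": {"YourBrand"},
}


def citation_gaps(citations, brand):
    """Return fanout queries where other brands are cited but `brand` is not."""
    return sorted(
        query
        for query, brands in citations.items()
        if brands and brand not in brands
    )


def coverage(citations, brand):
    """Return the share of fanout queries in which `brand` is cited."""
    if not citations:
        return 0.0
    cited = sum(brand in brands for brands in citations.values())
    return cited / len(citations)


gaps = citation_gaps(citations, "YourBrand")
share = coverage(citations, "YourBrand")

print(f"Coverage: {share:.0%}")
for query in gaps:
    print(f"Gap: {query}")
```

Each query in `gaps` is a candidate for a dedicated piece of content that answers that one question directly.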

Frequently Asked Questions

What is a query fanout?

A query fanout is the process where AI models expand a single user question into multiple related sub-queries to retrieve comprehensive information before generating an answer.

How do fanout queries differ from keywords?

Traditional keywords reflect what users type. Fanout queries are AI-generated sub-queries users never see — they're longer-tail, more specific, and cover adjacent topics.

Hema Team

Contributor